May belonged to Google I/O. The announcements stacked up faster than the keynote could explain them, and most of them mattered: a unified AI filmmaking platform, a video model that finally understands physics and sound, smart glasses with Gemini wired in, and a try-on tool that lives inside Search. Around it, smaller signals from wearables, installation art and creator gaming filled in the rest of the picture.

Twelve things from May worth your time

Google I/O 2025

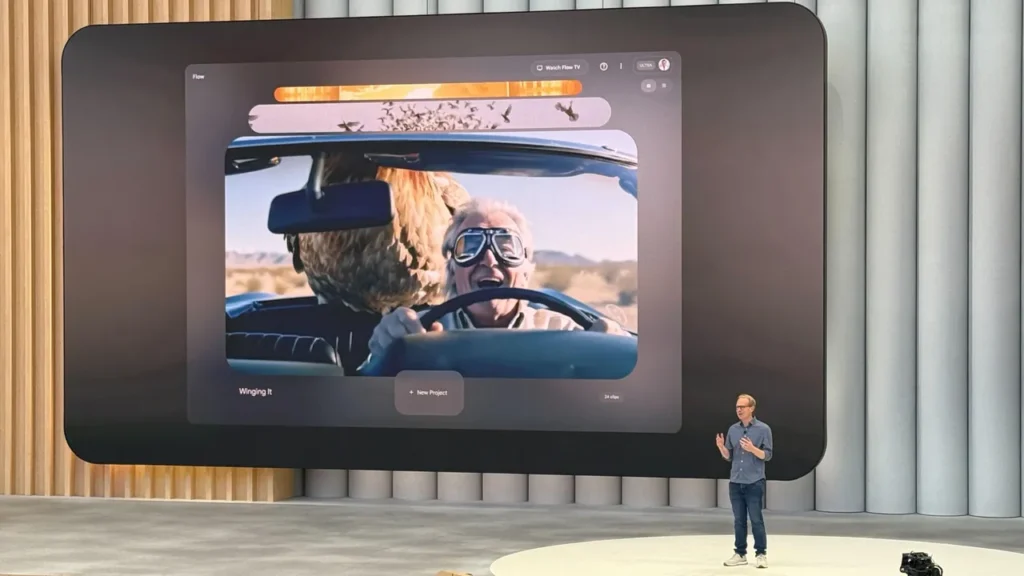

1. Google Flow, an AI filmmaking platform

Flow bundles Veo (video), Imagen (image) and Gemini (text) into one filmmaking interface, with a Flow TV companion that lets you browse 600+ films and read the exact prompts that produced them. The transparency is the interesting bit. Most AI tooling hides the prompt; Flow puts it next to the output so teams can actually learn how a result was made. For brand teams it collapses the gap between "we have an idea" and "here's a film of the idea" from days to an afternoon.

2. Veo 3, physics-aware video generation

DeepMind's Veo 3 generates video with believable physics, native sound effects and dialogue from a single prompt. The headline demos are the water sims, but the quieter breakthrough is multi-modal sync: visuals, audio and motion staying aligned across a clip without manual passes. This is the first text-to-video output we've watched without immediately reaching for the "yes, but" caveats. It still won't make content good on its own (you need the rest of the craft), but it's a serious tool now rather than a curiosity.

3. Android XR glasses with Gemini

Google teased smart glasses with Gemini wired in at the OS level: real-time translation, environmental understanding, hands-free contextual prompts. We've been calling wearables the next platform shift for a while, and this is the strongest signal yet that ambient, glasses-based information layers are heading toward something normal people will wear. For brands that means thinking about persistent overlays in retail, festival and tourism contexts without an app download in the path.

4. AI Try-On inside Google Search

Google's generative try-on now lives natively inside Search, visualising clothing across body types and poses with no app, no filter, no extra surface. The shift is where it sits, not what it does. Once visual try-on becomes a default expectation in the same place people already shop, retail brands without an answer for it are going to look behind. Worth testing conversion lift against standard product pages now rather than later.

Wearables beyond Google

5. Reebok x Innovative Eyewear, AI sport glasses

Reebok's smart eyewear is built for the workout rather than the boardroom: outdoor-tuned amplifiers, high-fidelity speakers, real-time coaching cues. Fitness wearables sliding into performance tooling is the interesting trajectory here. The biometric and audio streams these things produce are exactly the data you'd want feeding into a sports activation or branded coaching experience.

6. Ray-Ban capture plus Runway edit

FOOH (fake out-of-home) work usually means heavy 3D, animation and compositing. A new workflow is collapsing it into something simpler: capture passively with Meta Ray-Bans, then enhance and reframe with generative editing in Runway. Record now, edit later, with the AI doing the heavy compositing pass. We've shipped FOOH work before, and the appeal here is how much friction it strips out of the production timeline without giving up the cinematic finish.

Immersive spaces and visual storytelling

7. Height-based illusion installations

A run of viral installations uses transparent floor surfaces with projected content underneath, with visitors standing on raised platforms and looking down into a depth that isn't really there. No interactive system, no sensors, no head tracking. Just spatial design and perception doing the work. A useful reminder that emotional impact and technical complexity aren't the same axis, and that a clever floor can outperform a wall of LEDs if the brief is about presence rather than spectacle.

Interactive spaces and gesture tracking

8. Real-time light and sound installations

A small-scale piece pulling lasers, lighting, audio and LED-embedded objects into one synchronised system, where everything reacts in lockstep. The technical overhead is modest; the output isn't. We keep coming back to this kind of multi-element setup for tactile, intimate event spaces where presence and movement need to drive the visuals rather than a button press.

Commerce gets visual

9. HYPERVSN's mobile hologram truck

HYPERVSN put their holographic display tech on the back of a truck and drove it through cities. We've worked with HYPERVSN on plenty of activations, and the appeal here is portability: high-precision holographic displays that can land in places without screen real estate, do the spectacle, and roll on. Useful for product launches and touring stunts where the surface matters as much as the content.

10. Photoreal creator worlds in Roblox

Roblox creators are now building environments that hold their own against AAA studio output, with iteration cycles measured in days rather than quarters. The "YouTube-ification" of gaming is a good way to read it: high-engagement worlds being built outside the studio system, by creators with their own audiences. For youth-facing brands the question stops being "should we be on Roblox" and becomes "who's the creator we'd actually want to build with."

Industry commentary and trends

11. Browser-native 3D digitisation

Detailed 3D scans with spatial annotations and real parallax, running in a browser tab with no download path. The fidelity is the surprise; the delivery surface is the unlock. Stadium tours, locker rooms, museum exhibits, heritage sites, behind-the-scenes product walks — anything you'd want someone to step inside without an app install. Photoreal exploration with editorial layers on top.

12. Generative interactive web

Web tech has quietly caught up with the kind of organic, fluid visuals that used to need a downloadable app or a heavy WebGL build. Code-driven, asset-light, responsive across devices. A live, generative microsite is now a reasonable answer for briefs that need visual presence without the load times, and it's a useful reminder that the browser is still where the broadest audience lives.

What we're taking into June

The throughline this month is accessibility. Filmmaking, try-on, photoreal worlds, web visuals — all of them got cheaper, faster, or closer to the surface where audiences already are. Google I/O set the pace, but the real story is how quickly the rest of the toolkit is rearranging itself around it. Get in touch if any of this maps to something you're trying to build.