Shaping AI with your hands

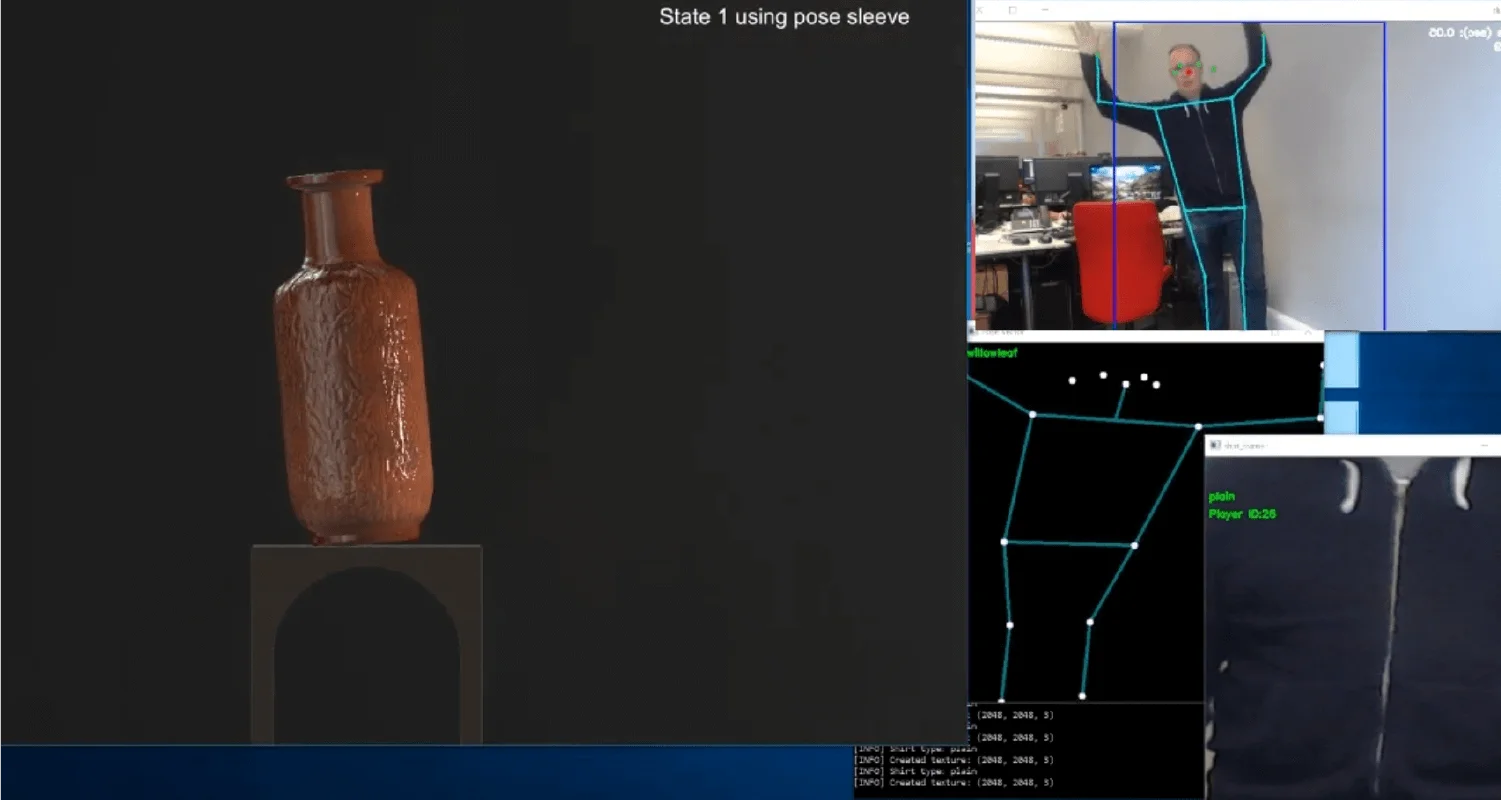

Morphing Clay was built with Future Deluxe and Bit Studio for Google, exhibited across two live events as part of a programme exploring the creative possibilities of AI. The premise was direct: participants stand in front of a screen, make gestures, and watch AI-generated clay forms respond and transform in real time.

The interaction feels physical even though nothing physical is happening. That tension between gesture and digital material was the design intent from the start. We wanted people to feel like they were sculpting, not operating a screen.

For Google, the experience needed to communicate a specific idea: that AI is a medium for human creativity, not a replacement for it. The clay metaphor does that work without requiring any explanation. You shape it. It responds to you.